# Binary Installation of a Highly Available k8s Cluster (v1.27.2)

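# k8s Cluster Network Segment Planning

Installing the cluster involves three network segments:

Host segment: the network segment of the servers on which k8s is installed.

Pod segment: the network segment for Pods; a Pod IP is effectively the container's IP.

Service segment: the network segment for k8s Services, which are used for communication between containers in the cluster.

The Service segment is usually set to 10.96.0.0/12.

The Pod segment is usually set to 10.244.0.0/16 or 172.16.0.0/12.

The host segment might be 192.168.0.0/24.

Note that these three segments must not overlap in any way.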

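For example, if the host IPs are 10.105.0.x,

then the Service segment cannot be 10.96.0.0/12, because the usable IPs of 10.96.0.0/12 are:

10.96.0.1 ~ 10.111.255.254

10.105 falls inside this range, so the segments overlap and the Service segment must be changed.

It can be changed to 192.168.0.0/16 (note that if the Service segment starts with 192.168, the prefix length should be 16 rather than 12, because with a /12 prefix the first usable IP is 192.160.0.1, not 192.168.0.1).

By the same reasoning, the other segments must not overlap either. You can calculate the ranges with http://tools.jb51.net/aideddesign/ip_net_calc/.

The usual recommendation is therefore to avoid reusing the first octet. For example, if your hosts start with 192, the Service segment can be 10.96.0.0/12.

If your hosts start with 10, change the Service segment to 192.168.0.0/16.

If your hosts start with 172, change the Pod segment to 192.168.0.0/16.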

Stick to combinations of the 10, 172 and 192 segments: when the first octets differ, a segment conflict is impossible and no calculation is needed.
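The overlap rule above can also be checked locally instead of with an online calculator. The following is a minimal sketch in POSIX shell; the function names `ip_to_int`, `int_to_ip`, `cidr_range` and `cidrs_overlap` are hypothetical helpers introduced here for illustration, not part of any k8s tooling.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1            # split the address on dots into $1..$4
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Convert a 32-bit integer back to dotted-quad notation.
int_to_ip() {
  echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Print the first and last address covered by a CIDR, e.g. 10.96.0.0/12.
cidr_range() {
  addr=${1%/*}
  bits=${1#*/}
  ip=$(ip_to_int "$addr")
  mask=$(( (4294967295 << (32 - $bits)) & 4294967295 ))
  first=$(( $ip & $mask ))
  last=$(( $first | (4294967295 ^ $mask) ))
  echo "$(int_to_ip "$first") $(int_to_ip "$last")"
}

# Succeed (exit status 0) when the two CIDRs share at least one address.
cidrs_overlap() {
  r1=$(cidr_range "$1")
  r2=$(cidr_range "$2")
  a_first=$(ip_to_int "${r1% *}"); a_last=$(ip_to_int "${r1#* }")
  b_first=$(ip_to_int "${r2% *}"); b_last=$(ip_to_int "${r2#* }")
  [ "$a_first" -le "$b_last" ] && [ "$b_first" -le "$a_last" ]
}
```

For example, `cidr_range 192.168.0.0/12` prints the range starting at 192.160.0.0, and `cidrs_overlap 10.105.0.0/24 10.96.0.0/12` succeeds, which is the conflict described above.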

Do not use servers configured with Chinese (for example, a Chinese system language)!

Host planning:

| Hostname | IP address | Description |
|---|---|---|
| k8s-master01 ~ 03 | 192.168.7.11 ~ 13 | master nodes * 3 |
| k8s-master-lb | 192.168.7.10 | keepalived virtual IP (VIP) |
| k8s-node01 ~ 02 | 192.168.7.14 ~ 15 | worker nodes * 2 |

Refer to "Rocky Linux 9 Initialization" to initialize the servers.

| Item | Notes |
|---|---|
| OS version | Rocky Linux release 9.2 (Blue Onyx) |
| containerd version | 1.7.2 |
| Pod segment | 172.16.0.0/12 |
| Service segment | 10.96.0.0/12 |
| Host segment | 192.168.7.0/24 |

# Basic Environment Configuration

Configure the hosts file on all nodes:

cat <<EOF >> /etc/hosts

192.168.7.11 k8s-master01

192.168.7.12 k8s-master02

192.168.7.13 k8s-master03

192.168.7.10 k8s-master-lb # if this is not a highly available cluster, use the IP of Master01

192.168.7.14 k8s-node01

192.168.7.15 k8s-node02

EOF

cat /etc/hosts

Install the yum utilities as follows:

yum -y install yum-utils device-mapper-persistent-data lvm2 langpacks-en

Install the required tools:

yum -y install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git

Disable firewalld, dnsmasq and SELinux on all nodes:

systemctl disable --now firewalld

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

Disable the swap partition on all nodes and comment out swap in fstab:

swapoff -a && sysctl -w vm.swappiness=0

sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab
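Because the `sed` above edits `/etc/fstab` in place, it can help to preview its effect first. The sketch below applies the same address pattern without `-i`, so it only prints the result; `preview_swap_fix` is a hypothetical helper name and the file path is passed in by the caller.

```shell
# Print FILE with every not-yet-commented line that mentions "swap"
# prefixed by "#". Nothing is written back to FILE.
preview_swap_fix() {
  sed '/^[^#]*swap/s/^/#/' "$1"
}
```

Running `preview_swap_fix /etc/fstab` shows what the in-place command would produce; lines that already start with `#` are left untouched.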

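Synchronize time on all nodes by installing chrony:

CentOS 7 uses chrony as its default time service. NTP is also supported but must be installed separately.

NTP and chrony cannot run at the same time: use only one of them and mask the other. chrony is recommended here.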

yum -y install chrony

Start the service:

systemctl start chronyd # start the service

systemctl enable chronyd # enable at boot; already enabled by default on CentOS 8 and CentOS 9

systemctl status chronyd

On node k8s-master01, set the time source to the Aliyun NTP server:

sed -e 's|^pool|#pool|g' \

-e '2aserver ntp.aliyun.com iburst' \

-e 's|#allow 192.168.0.0/16|allow 192.168.7.0/24|g' \

-i \

/etc/chrony.conf

systemctl restart chronyd

On the other nodes, set the time server to the master node. If the network already has a time server, use its address or domain name instead:

sed -e 's|^pool|#pool|g' \

-e '2aserver 192.168.7.11 iburst' \

-i \

/etc/chrony.conf

systemctl restart chronyd

Configure limits on all nodes:

ulimit -SHn 65535

# Append the following to the end of the file

cat <<EOF >> /etc/security/limits.conf

* soft nofile 65536

* hard nofile 131072

* soft nproc 65535

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF

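Set up key-based SSH login from k8s-master01 to the other nodes. During installation, the configuration files and certificates are all generated on k8s-master01, and the cluster is also managed from k8s-master01. On Alibaba Cloud or AWS, a separate kubectl server is required. Generate the key as follows: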

cd ~

ssh-keygen -t rsa

Configure passwordless login from k8s-master01 to the other nodes:

for i in k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02;do ssh-copy-id -i .ssh/id_rsa.pub $i;done

Download the installation files on k8s-master01:

cd /root

git clone https://github.com/dotbalo/k8s-ha-install.git

If the clone fails, you can clone from the k8s-ha-install mirror instead.

Upgrade the system on all nodes and reboot:

yum update -y --exclude=kernel* && reboot # required on CentOS 7; on CentOS 8 and CentOS 9, upgrade as needed

Install ipvsadm on all nodes:

yum -y install ipvsadm ipset sysstat conntrack libseccomp

Configure the ipvs modules on all nodes:

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

# Create /etc/modules-load.d/ipvs.conf with the following content

cat <<EOF > /etc/modules-load.d/ipvs.conf

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

systemctl enable --now systemd-modules-load.service


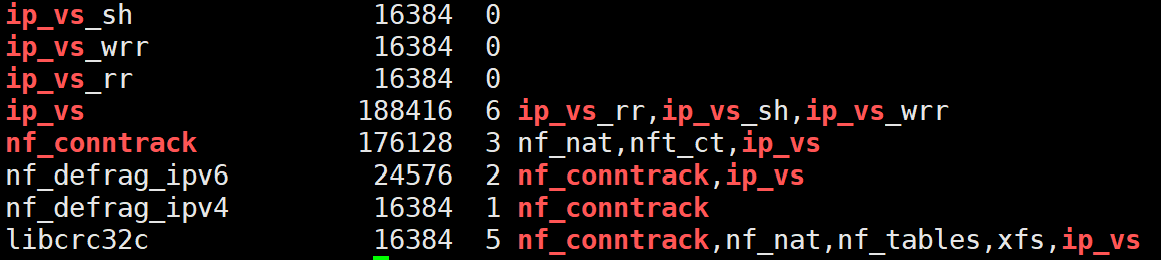

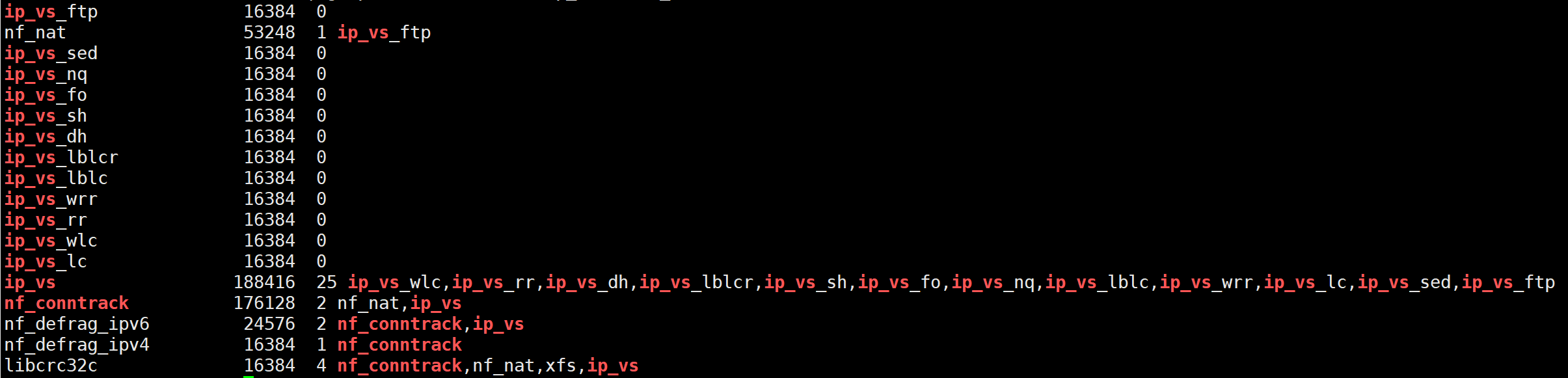

Check that the modules are loaded:

lsmod | grep -e ip_vs -e nf_conntrack
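The `lsmod | grep` output has to be compared by eye against the module list. As a minimal sketch, the hypothetical helper `missing_modules` below prints every module named in a modules-load file that does not appear in `/proc/modules`. It assumes one module name per line and treats modules compiled into the kernel as missing, because those never show up in `/proc/modules`.

```shell
# missing_modules CONF [LOADED]
# CONF:   a modules-load.d style file, one module name per line
# LOADED: file in /proc/modules format (defaults to /proc/modules)
missing_modules() {
  conf=$1
  loaded=${2:-/proc/modules}
  while read -r mod; do
    case $mod in
      ''|'#'*) continue ;;   # skip blank lines and comments
    esac
    # /proc/modules starts each line with "<name> <size> ..."
    grep -q "^$mod " "$loaded" || echo "$mod"
  done < "$conf"
}
```

`missing_modules /etc/modules-load.d/ipvs.conf` prints nothing when every listed module is loaded.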

Enable the kernel parameters required by a k8s cluster; configure them on all nodes:

cat <<EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

net.ipv4.conf.all.route_localnet = 1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sysctl --system
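In a long sysctl file it is easy to assign the same key twice, in which case the later assignment takes effect. The following sketch lists such keys; `duplicate_sysctl_keys` is a hypothetical helper name, and it assumes the plain `key = value` format used above.

```shell
# Print each key that is assigned more than once in a sysctl-style file.
duplicate_sysctl_keys() {
  awk -F= '
    # skip blank lines and comment lines
    /^[ \t]*(#|;|$)/ { next }
    {
      key = $1
      # strip spaces and tabs so that both spellings of an assignment match
      gsub(/[ \t]/, "", key)
      if (++seen[key] == 2) print key
    }
  ' "$1"
}
```

`duplicate_sysctl_keys /etc/sysctl.d/k8s.conf` prints one line per repeated key and nothing when every key is unique.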

After configuring the kernel on all nodes, reboot the servers and confirm that the modules are still loaded after the reboot:

shutdown -r now

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

# Installing the Basic Components

# Containerd

Install containerd on all nodes:

# Remove unrelated packages first

yum -y remove podman docker containerd

yum -y erase podman buildah

# Download containerd, runc, cni-plugins and containerd.service into /opt (-P sets the target directory)

wget -P /opt https://github.com/containerd/containerd/releases/download/v1.7.2/containerd-1.7.2-linux-amd64.tar.gz

wget -P /opt https://github.com/containernetworking/plugins/releases/download/v1.3.0/cni-plugins-linux-amd64-v1.3.0.tgz

wget -P /opt https://github.com/opencontainers/runc/releases/download/v1.1.7/runc.amd64

wget -P /opt https://raw.githubusercontent.com/containerd/containerd/main/containerd.service

# Install containerd

tar Cxzvf /usr/local /opt/containerd-1.7.2-linux-amd64.tar.gz

install -m 755 /opt/runc.amd64 /usr/local/sbin/runc

mkdir -p /opt/cni/bin

tar Cxzvf /opt/cni/bin /opt/cni-plugins-linux-amd64-v1.3.0.tgz

# Start containerd through systemd

mkdir -p /usr/local/lib/systemd/system

cp /opt/containerd.service /usr/local/lib/systemd/system/containerd.service

systemctl daemon-reload

systemctl enable --now containerd

systemctl status containerd

# crictl

Install crictl on all nodes:

wget -P /opt https://github.com/kubernetes-sigs/cri-tools/releases/download/v1.27.0/crictl-v1.27.0-linux-amd64.tar.gz

tar Czxvf /usr/local/bin /opt/crictl-v1.27.0-linux-amd64.tar.gz

crictl --version

Configure the kernel modules required by Containerd on all nodes:

cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf

overlay

br_netfilter

EOF

Load the modules on all nodes:

modprobe -- overlay

modprobe -- br_netfilter

Configure the kernel parameters required by Containerd on all nodes:

cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

Apply the kernel parameters on all nodes:

sysctl --system

Generate the Containerd configuration file on all nodes:

mkdir -p /etc/containerd

containerd config default | tee /etc/containerd/config.toml

On all nodes, change the Containerd cgroup driver to systemd:

sed -i 's|SystemdCgroup = false|SystemdCgroup = true|g' /etc/containerd/config.toml

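On all nodes, change the value of sandbox_image:

The configuration file contains the default sandbox_image = "registry.k8s.io/pause:3.8". For network reasons this image usually cannot be pulled from registry.k8s.io, but the same image can be pulled from the Alibaba Cloud registry. Change sandbox_image manually so that the image can be pulled.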

sed -i.bak '/sandbox_image / s/= "[^"][^"]*"/= "registry.cn-hangzhou.aliyuncs.com\/google_containers\/pause:3.8"/' /etc/containerd/config.toml
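Both `sed` edits fail silently when their pattern does not match, so it is worth confirming the result. The sketch below is one way to do that; `check_containerd_config` is a hypothetical helper name, and it only inspects the two keys changed above.

```shell
# check_containerd_config [FILE]
# Fails if SystemdCgroup is not true; otherwise prints the sandbox_image line.
check_containerd_config() {
  cfg=${1:-/etc/containerd/config.toml}
  if ! grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$cfg"; then
    echo "SystemdCgroup is not set to true in $cfg" >&2
    return 1
  fi
  # Print the effective sandbox_image line without its leading indentation.
  grep -E '^[[:space:]]*sandbox_image[[:space:]]*=' "$cfg" | sed 's/^[[:space:]]*//'
}
```

Called without arguments it reads `/etc/containerd/config.toml`; the printed line should name the registry you expect to pull the pause image from.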

Start Containerd on all nodes and enable it at boot:

systemctl daemon-reload

systemctl enable --now containerd

systemctl restart containerd

Configure the runtime endpoint that the crictl client connects to on all nodes:

cat <<EOF > /etc/crictl.yaml

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

2

3

4

5

6

# K8s及etcd安装

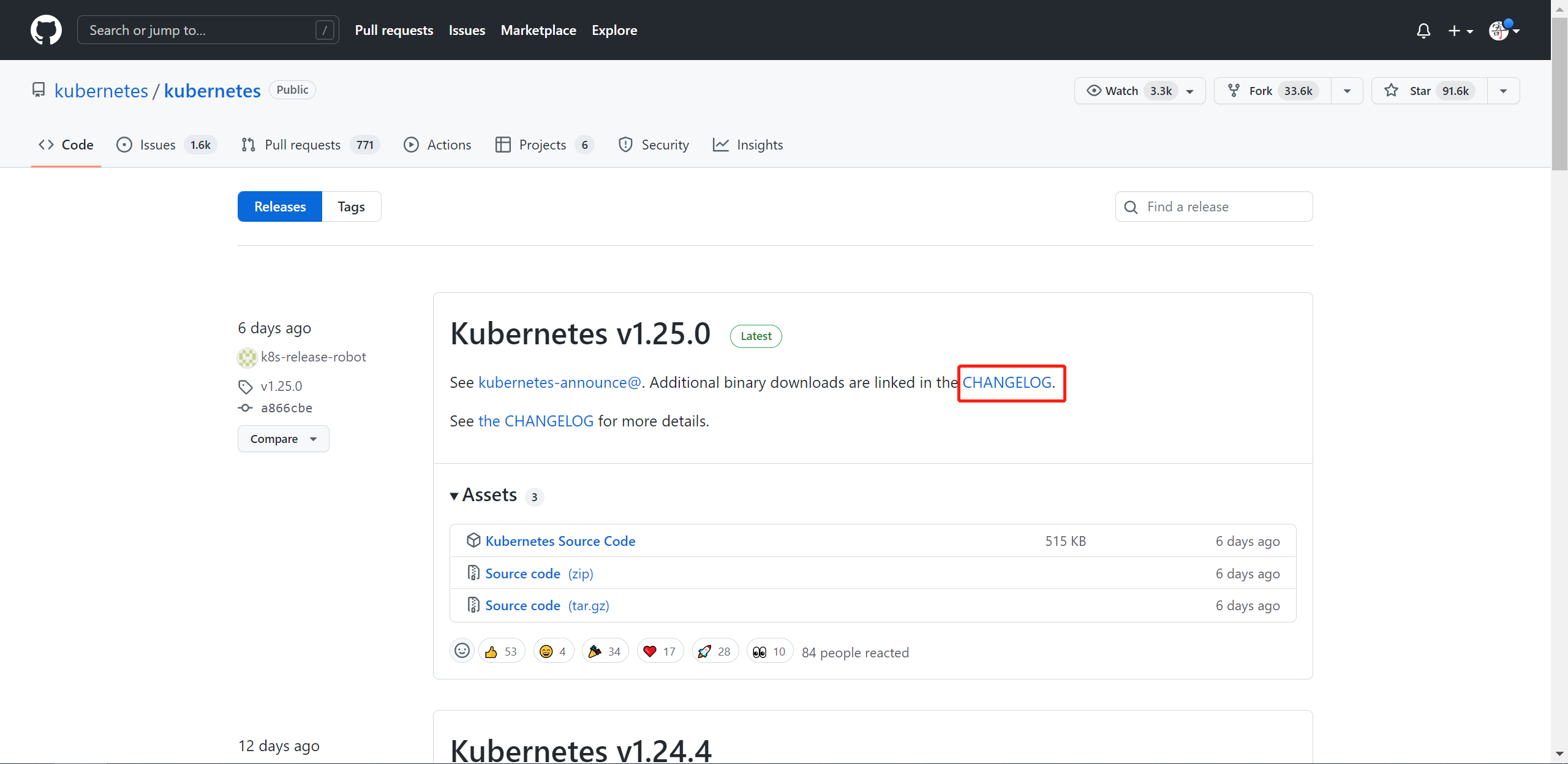

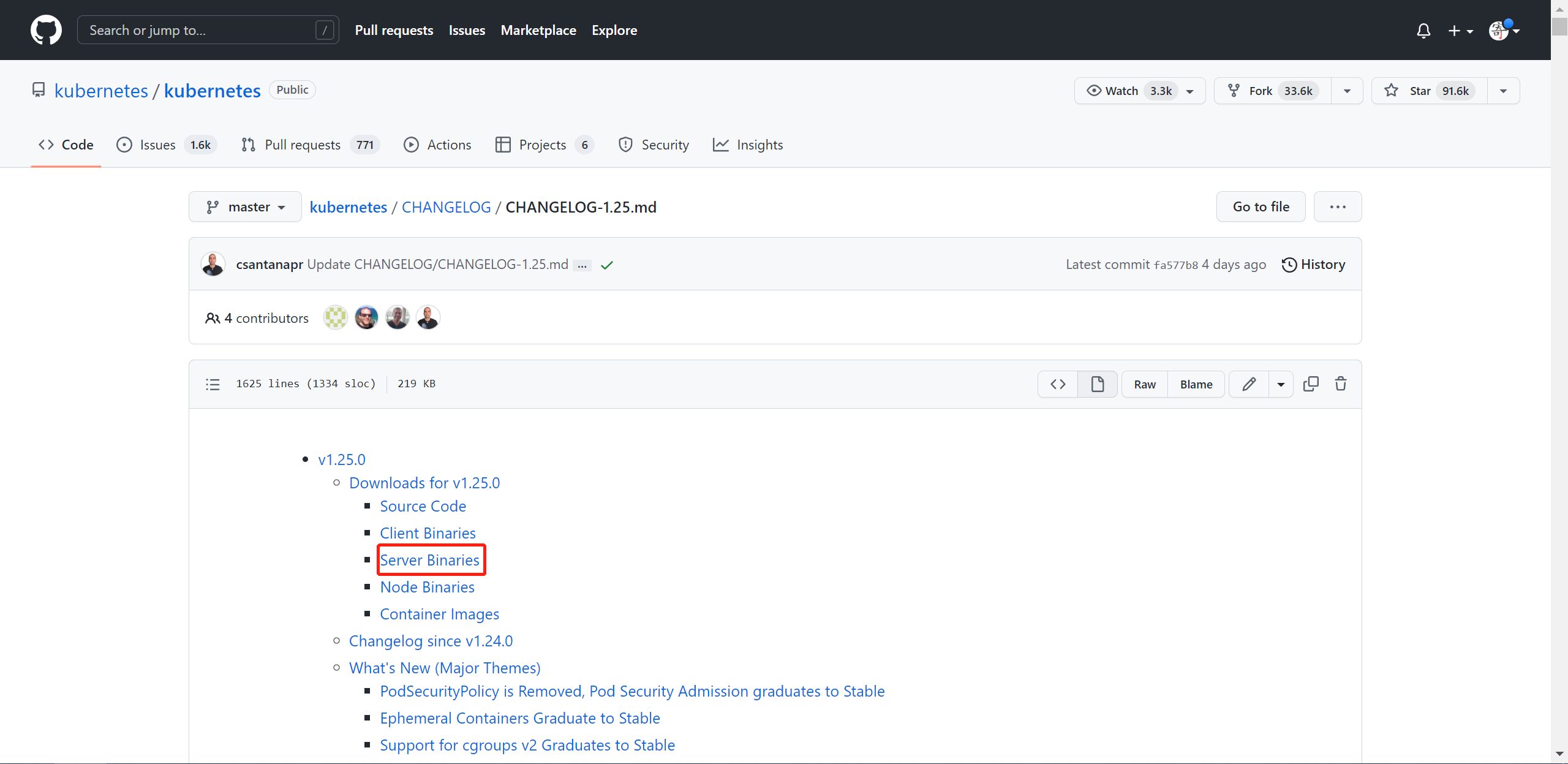

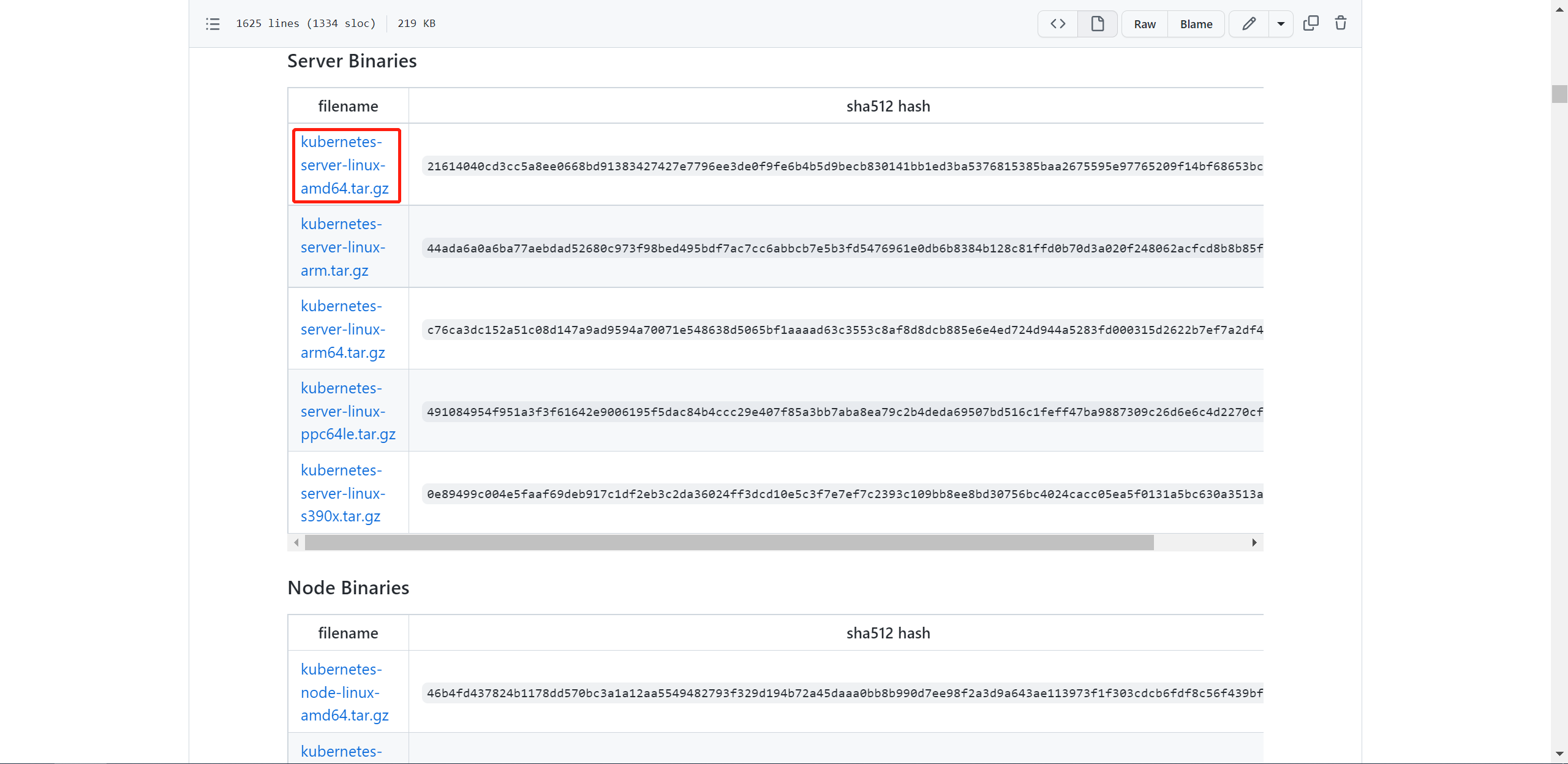

版本根据 kubernetes/kubernetes/releases (opens new window) 官方发布的版本去下载。

选择对应版本,进入 CHANGELOG

选择 Server Binaries

根据系统版本选择安装包即可

同样的操作,etcd 也选择对应的版本去下载即可。etcd-io/etcd/releases (opens new window)

k8s-master01 下载 kubernetes, etcd 安装包:

wget https://dl.k8s.io/v1.27.2/kubernetes-server-linux-amd64.tar.gz

wget https://github.com/etcd-io/etcd/releases/download/v3.4.26/etcd-v3.4.26-linux-amd64.tar.gz

Extract the kubernetes and etcd packages on k8s-master01:

tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

tar -zxvf etcd-v3.5.9-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.5.9-linux-amd64/etcd{,ctl}

Verify the versions:

kubelet --version

etcdctl version

Send the components to the other nodes:

MasterNodes='k8s-master02 k8s-master03'

WorkNodes='k8s-node01 k8s-node02'

for NODE in $MasterNodes; do

echo $NODE

scp /usr/local/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy} $NODE:/usr/local/bin/

scp /usr/local/bin/etcd* $NODE:/usr/local/bin/

done

for NODE in $WorkNodes; do scp /usr/local/bin/kube{let,-proxy} $NODE:/usr/local/bin/; done

Create the /opt/cni/bin directory on all nodes:

mkdir -p /opt/cni/bin

On k8s-master01, switch to the branch for the matching version:

cd /root/k8s-ha-install && git checkout manual-installation-v1.26.x

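# Generating Certificates

This is the most critical step of a binary installation. A single mistake here breaks everything that follows, so make sure every step is correct!

Download the certificate generation tools on k8s-master01:

The official download page is cloudflare/cfssl/releases; pick the latest version.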

wget "https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssl_1.6.4_linux_amd64" -O /usr/local/bin/cfssl

wget "https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssljson_1.6.4_linux_amd64" -O /usr/local/bin/cfssljson

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson

# etcd Certificates

Create the etcd certificate directory on all Master nodes:

mkdir /etc/etcd/ssl -p

Create the kubernetes directories on all nodes:

mkdir -p /etc/kubernetes/pki

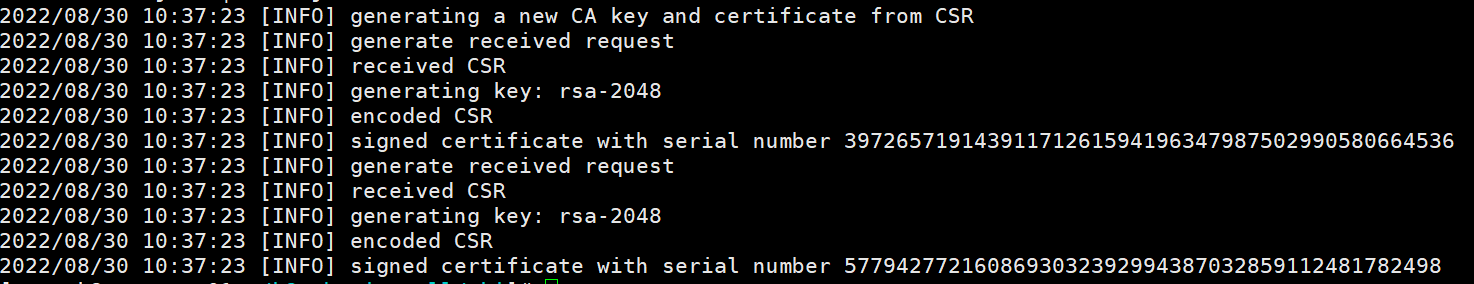

Generate the etcd certificates on k8s-master01:

cd /root/k8s-ha-install/pki

# Generate the etcd CA certificate and its key

cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/etcd/ssl/etcd-ca

cfssl gencert \

-ca=/etc/etcd/ssl/etcd-ca.pem \

-ca-key=/etc/etcd/ssl/etcd-ca-key.pem \

-config=ca-config.json \

-hostname=127.0.0.1,k8s-master01,k8s-master02,k8s-master03,192.168.7.11,192.168.7.12,192.168.7.13 \

-profile=kubernetes \

etcd-csr.json | cfssljson -bare /etc/etcd/ssl/etcd

Copy the certificates to the other nodes:

MasterNodes='k8s-master02 k8s-master03'

WorkNodes='k8s-node01 k8s-node02'

for NODE in $MasterNodes; do

ssh $NODE "mkdir -p /etc/etcd/ssl"

for FILE in etcd-ca-key.pem etcd-ca.pem etcd-key.pem etcd.pem; do

scp /etc/etcd/ssl/${FILE} $NODE:/etc/etcd/ssl/${FILE}

done

done

# k8s Component Certificates

Generate the kubernetes certificates on k8s-master01:

cd /root/k8s-ha-install/pki

cfssl gencert -initca ca-csr.json | cfssljson -bare /etc/kubernetes/pki/ca

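10.96.0.0/12 is the k8s Service segment, and 10.96.0.1 is the first IP in it. If you change the Service segment, change 10.96.0.1 accordingly.

If this is not a highly available cluster, 192.168.7.10 is the IP of k8s-master01; otherwise it is the keepalived virtual IP.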

cfssl gencert -ca=/etc/kubernetes/pki/ca.pem -ca-key=/etc/kubernetes/pki/ca-key.pem -config=ca-config.json -hostname=10.96.0.1,192.168.7.10,127.0.0.1,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.cluster,kubernetes.default.svc.cluster.local,192.168.7.11,192.168.7.12,192.168.7.13 -profile=kubernetes apiserver-csr.json | cfssljson -bare /etc/kubernetes/pki/apiserver

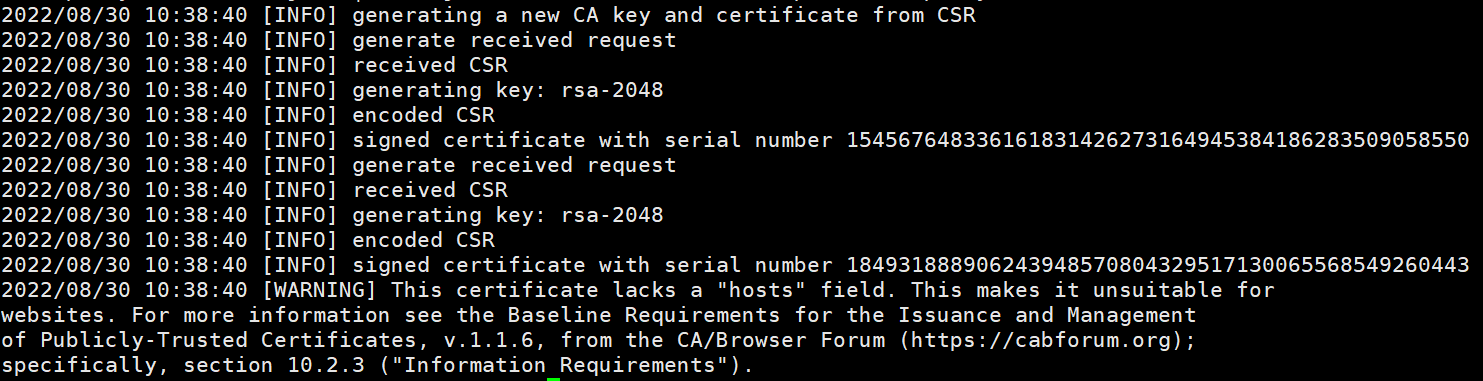

Generate the apiserver aggregation-layer certificates (used by the requestheader-client-xxx and requestheader-allowed-xxx options; the allowed name is aggregator):

cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-ca

cfssl gencert -ca=/etc/kubernetes/pki/front-proxy-ca.pem -ca-key=/etc/kubernetes/pki/front-proxy-ca-key.pem -config=ca-config.json -profile=kubernetes front-proxy-client-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-client

Generate the controller-manager certificate:

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

manager-csr.json | cfssljson -bare /etc/kubernetes/pki/controller-manager

# Note: if this is not a highly available cluster, change 192.168.7.10:8443 to the master01 address and 8443 to the apiserver port (6443 by default)

# set-cluster: define a cluster entry

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.7.10:8443 \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# set-context: define a context entry

kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# set-credentials: define a user entry

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/etc/kubernetes/pki/controller-manager.pem \

--client-key=/etc/kubernetes/pki/controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# use-context: make this context the default

kubectl config use-context system:kube-controller-manager@kubernetes \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/scheduler

# Note: if this is not a highly available cluster, change 192.168.7.10:8443 to the master01 address and 8443 to the apiserver port (6443 by default)

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.7.10:8443 \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \

--client-certificate=/etc/kubernetes/pki/scheduler.pem \

--client-key=/etc/kubernetes/pki/scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config use-context system:kube-scheduler@kubernetes \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin

# Note: if this is not a highly available cluster, change 192.168.7.10:8443 to the master01 address and 8443 to the apiserver port (6443 by default)

kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://192.168.7.10:8443 --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-credentials kubernetes-admin --client-certificate=/etc/kubernetes/pki/admin.pem --client-key=/etc/kubernetes/pki/admin-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-context kubernetes-admin@kubernetes --cluster=kubernetes --user=kubernetes-admin --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config use-context kubernetes-admin@kubernetes --kubeconfig=/etc/kubernetes/admin.kubeconfig
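The controller-manager, scheduler and admin kubeconfig files above are built with the same four `kubectl config` calls and differ only in user name, certificate name and output file. As a sketch under that assumption, the hypothetical helper `make_kubeconfig` wraps the four calls; the default server address is the VIP used in this document.

```shell
# make_kubeconfig USER CERT_BASENAME KUBECONFIG [SERVER]
# CERT_BASENAME is the cfssljson -bare name, e.g. "scheduler" for
# scheduler.pem and scheduler-key.pem under /etc/kubernetes/pki.
make_kubeconfig() {
  user=$1
  cert=$2
  kubeconfig=$3
  server=${4:-https://192.168.7.10:8443}
  pki=/etc/kubernetes/pki

  kubectl config set-cluster kubernetes \
    --certificate-authority="$pki/ca.pem" --embed-certs=true \
    --server="$server" --kubeconfig="$kubeconfig" &&
  kubectl config set-credentials "$user" \
    --client-certificate="$pki/${cert}.pem" \
    --client-key="$pki/${cert}-key.pem" \
    --embed-certs=true --kubeconfig="$kubeconfig" &&
  kubectl config set-context "$user@kubernetes" \
    --cluster=kubernetes --user="$user" --kubeconfig="$kubeconfig" &&
  kubectl config use-context "$user@kubernetes" --kubeconfig="$kubeconfig"
}
```

For example, `make_kubeconfig system:kube-scheduler scheduler /etc/kubernetes/scheduler.kubeconfig` issues the same commands as the scheduler block above.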


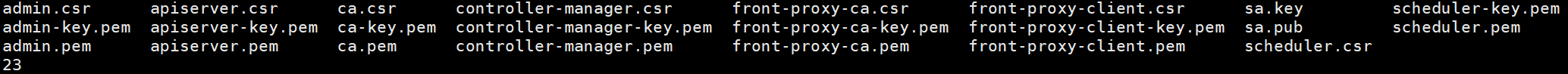

Create the ServiceAccount key pair, which is used to sign and verify ServiceAccount tokens:

openssl genrsa -out /etc/kubernetes/pki/sa.key 2048

openssl rsa -in /etc/kubernetes/pki/sa.key -pubout -out /etc/kubernetes/pki/sa.pub

Send the certificates to the other nodes:

for NODE in k8s-master02 k8s-master03; do

for FILE in $(ls /etc/kubernetes/pki | grep -v etcd); do

scp /etc/kubernetes/pki/${FILE} $NODE:/etc/kubernetes/pki/${FILE}

done

for FILE in admin.kubeconfig controller-manager.kubeconfig scheduler.kubeconfig; do

scp /etc/kubernetes/${FILE} $NODE:/etc/kubernetes/${FILE}

done

done

List the certificate files:

ls /etc/kubernetes/pki/

ls /etc/kubernetes/pki/ |wc -l
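A file count alone does not show which file is missing. The sketch below checks a directory against an explicit list of names; `missing_pki_files` is a hypothetical helper, and the list you pass should follow from the `cfssljson -bare` and `openssl` commands above (for example `ca.pem`, `apiserver-key.pem`, `sa.pub`).

```shell
# missing_pki_files DIR NAME...
# Print every NAME that is absent or empty in DIR.
missing_pki_files() {
  dir=$1
  shift
  for name in "$@"; do
    [ -s "$dir/$name" ] || echo "$name"
  done
}
```

`missing_pki_files /etc/kubernetes/pki ca.pem ca-key.pem apiserver.pem apiserver-key.pem sa.key sa.pub` prints nothing when all six files exist and are non-empty.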

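# Kubernetes System Component Configuration

# etcd Configuration

The etcd configuration is largely the same on every Master node; remember to change the hostname and IP address in each node's etcd configuration.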
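The `initial-cluster` value is identical on all three nodes and follows a fixed `name=https://ip:2380` pattern, so it can be generated rather than typed. This is a small sketch; `etcd_initial_cluster` is a hypothetical helper name.

```shell
# etcd_initial_cluster NAME=IP [NAME=IP ...]
# Build the comma-separated initial-cluster string for the given members.
etcd_initial_cluster() {
  out=
  for pair in "$@"; do
    name=${pair%%=*}
    ip=${pair#*=}
    # prepend a comma only when out already holds a member
    out="${out:+$out,}$name=https://$ip:2380"
  done
  echo "$out"
}
```

`etcd_initial_cluster k8s-master01=192.168.7.11 k8s-master02=192.168.7.12 k8s-master03=192.168.7.13` produces the string used in the configurations that follow.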

# k8s-master01

cat <<EOF > /etc/etcd/etcd.config.yml

name: 'k8s-master01'

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://192.168.7.11:2380'

listen-client-urls: 'https://192.168.7.11:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://192.168.7.11:2380'

advertise-client-urls: 'https://192.168.7.11:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8s-master01=https://192.168.7.11:2380,k8s-master02=https://192.168.7.12:2380,k8s-master03=https://192.168.7.13:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'

  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'

  client-cert-auth: true

  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'

  auto-tls: true

peer-transport-security:

  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'

  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'

  peer-client-cert-auth: true

  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'

  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF


# k8s-master02

cat <<EOF > /etc/etcd/etcd.config.yml

name: 'k8s-master02'

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://192.168.7.12:2380'

listen-client-urls: 'https://192.168.7.12:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://192.168.7.12:2380'

advertise-client-urls: 'https://192.168.7.12:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8s-master01=https://192.168.7.11:2380,k8s-master02=https://192.168.7.12:2380,k8s-master03=https://192.168.7.13:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'

  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'

  client-cert-auth: true

  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'

  auto-tls: true

peer-transport-security:

  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'

  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'

  peer-client-cert-auth: true

  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'

  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF


# k8s-master03

cat <<EOF > /etc/etcd/etcd.config.yml

name: 'k8s-master03'

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://192.168.7.13:2380'

listen-client-urls: 'https://192.168.7.13:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://192.168.7.13:2380'

advertise-client-urls: 'https://192.168.7.13:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8s-master01=https://192.168.7.11:2380,k8s-master02=https://192.168.7.12:2380,k8s-master03=https://192.168.7.13:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'

  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'

  client-cert-auth: true

  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'

  auto-tls: true

peer-transport-security:

  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'

  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'

  peer-client-cert-auth: true

  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'

  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF

# Creating the Service

Create the etcd service on all Master nodes and start it:

cat <<EOF > /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Service

Documentation=https://coreos.com/etcd/docs/latest/

After=network.target

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.config.yml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

EOF

Create the etcd certificate directory on all Master nodes, then start etcd:

mkdir /etc/kubernetes/pki/etcd

ln -s /etc/etcd/ssl/* /etc/kubernetes/pki/etcd/

systemctl daemon-reload

systemctl enable --now etcd

systemctl status etcd


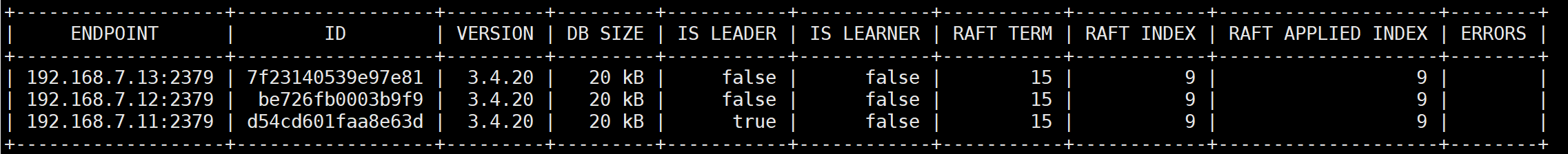

Check the etcd status:

export ETCDCTL_API=3

etcdctl --endpoints="192.168.7.13:2379,192.168.7.12:2379,192.168.7.11:2379" --cacert=/etc/kubernetes/pki/etcd/etcd-ca.pem --cert=/etc/kubernetes/pki/etcd/etcd.pem --key=/etc/kubernetes/pki/etcd/etcd-key.pem endpoint status --write-out=table

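# High Availability Configuration

On a public cloud, use the provider's own load balancer, such as Alibaba Cloud SLB or Tencent Cloud CLB, in place of haproxy and keepalived, because most public clouds do not support keepalived. On Alibaba Cloud, the kubectl client also cannot run on a master node, because SLB has a loopback limitation: a server behind the SLB cannot connect back to that SLB. Tencent Cloud reportedly does not have this limitation and is recommended for that reason.

Treat these cloud claims with reservation: no cloud resources were used here, so they could not be verified.

Install keepalived and haproxy on all Master nodes:

yum -y install keepalived haproxy

# HAProxy Configuration

Configure HAProxy on all Master nodes; the configuration is identical on each: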

cat <<EOF > /etc/haproxy/haproxy.cfg

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend k8s-master

bind 0.0.0.0:8443

bind 127.0.0.1:8443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server k8s-master01 192.168.7.11:6443 check

server k8s-master02 192.168.7.12:6443 check

server k8s-master03 192.168.7.13:6443 check

EOF


# Master01 keepalived

cat <<EOF > /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state MASTER

interface eth0

mcast_src_ip 192.168.7.11

virtual_router_id 51

priority 101

nopreempt

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.7.10

}

track_script {

chk_apiserver

} }

EOF


# Master02 keepalived

cat <<EOF > /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

mcast_src_ip 192.168.7.12

virtual_router_id 51

priority 100

nopreempt

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.7.10

}

track_script {

chk_apiserver

} }

EOF


# Master03 keepalived

cat <<EOF > /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

mcast_src_ip 192.168.7.13

virtual_router_id 51

priority 100

nopreempt

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.7.10

}

track_script {

chk_apiserver

} }

EOF

# Health Check Configuration

Configure the health check on all Master nodes; the configuration is identical on each:

cat <<'EOF' > /etc/keepalived/check_apiserver.sh

#!/bin/bash

err=0

for k in $(seq 1 3); do

check_code=$(pgrep haproxy)

if [[ $check_code == "" ]]; then

err=$(expr $err + 1)

sleep 1

continue

else

err=0

break

fi

done

if [[ $err != "0" ]]; then

echo "systemctl stop keepalived"

/usr/bin/systemctl stop keepalived

exit 1

else

exit 0

fi

EOF

chmod +x /etc/keepalived/check_apiserver.sh
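When a script is written through a here-document, the quoting of the delimiter decides whether `$(...)` and `$var` are expanded while the file is being written or kept literally for the script to evaluate later. The sketch below demonstrates the difference with two hypothetical helpers that write the same line to a file of your choice.

```shell
# Unquoted delimiter: the shell expands $(...) while writing the file.
write_unquoted() {
  cat <<EOF > "$1"
count=$(seq 1 3 | wc -l)
EOF
}

# Quoted delimiter: the body is written exactly as typed.
write_quoted() {
  cat <<'EOF' > "$1"
count=$(seq 1 3 | wc -l)
EOF
}
```

After `write_quoted /tmp/b`, the file still contains the literal `$(seq 1 3 | wc -l)`; after `write_unquoted /tmp/a`, it contains the already computed number instead. A health check script needs the literal form so that `pgrep haproxy` runs on every check rather than once at install time.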

Start haproxy and keepalived on all master nodes:

systemctl daemon-reload

systemctl enable --now haproxy

systemctl enable --now keepalived

systemctl status keepalived

Verify the VIP:

ping 192.168.7.10 -c 10

telnet 192.168.7.10 8443

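If ping fails or telnet does not print the line containing `^]` (Escape character is '^]'), the VIP is not working. Do not continue with the installation; troubleshoot keepalived first, for example the firewall and SELinux, the status of haproxy and keepalived, and the listening ports.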
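For scripted checks, the interactive telnet test can be replaced by a non-interactive probe. This is a minimal sketch that assumes `bash` (for its `/dev/tcp` redirection) and the coreutils `timeout` command are installed; `port_open` is a hypothetical helper name.

```shell
# port_open HOST PORT [TIMEOUT_SECONDS]
# Succeeds when a TCP connection to HOST:PORT can be opened in time.
port_open() {
  # bash -c receives HOST as $0 and PORT as $1
  timeout "${3:-2}" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}
```

`port_open 192.168.7.10 8443 && echo "VIP reachable"` prints the message only when haproxy answers on the VIP.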

# Kubernetes Component Configuration

Create the required directories on all nodes:

mkdir -p /etc/kubernetes/manifests/ /etc/systemd/system/kubelet.service.d /var/lib/kubelet /var/log/kubernetes

# Apiserver

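Note: if this is not a highly available cluster, change 192.168.7.10 to the address of k8s-master01.

Note: this document uses 10.96.0.0/12 as the k8s Service segment. It must not overlap with the host segment or the Pod segment; change it as needed.

Create the kube-apiserver service on all Master nodes:

# Master01 configuration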

cat <<EOF > /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \\

--v=2 \\

--allow-privileged=true \\

--bind-address=0.0.0.0 \\

--secure-port=6443 \\

--advertise-address=192.168.7.11 \\

--service-cluster-ip-range=10.96.0.0/12 \\

--service-node-port-range=30000-32767 \\

--etcd-servers=https://192.168.7.11:2379,https://192.168.7.12:2379,https://192.168.7.13:2379 \\

--etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \\

--etcd-certfile=/etc/etcd/ssl/etcd.pem \\

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \\

--client-ca-file=/etc/kubernetes/pki/ca.pem \\

--tls-cert-file=/etc/kubernetes/pki/apiserver.pem \\

--tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \\

--kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \\

--kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \\

--service-account-key-file=/etc/kubernetes/pki/sa.pub \\

--service-account-signing-key-file=/etc/kubernetes/pki/sa.key \\

--service-account-issuer=https://kubernetes.default.svc.cluster.local \\

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \\

--authorization-mode=Node,RBAC \\

--enable-bootstrap-token-auth=true \\

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \\

--proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \\

--proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \\

--requestheader-allowed-names=aggregator \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-extra-headers-prefix=X-Remote-Extra- \\

--requestheader-username-headers=X-Remote-User

# --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF

# Master02 configuration

cat <<EOF > /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \\

--v=2 \\

--allow-privileged=true \\

--bind-address=0.0.0.0 \\

--secure-port=6443 \\

--advertise-address=192.168.7.12 \\

--service-cluster-ip-range=10.96.0.0/12 \\

--service-node-port-range=30000-32767 \\

--etcd-servers=https://192.168.7.11:2379,https://192.168.7.12:2379,https://192.168.7.13:2379 \\

--etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \\

--etcd-certfile=/etc/etcd/ssl/etcd.pem \\

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \\

--client-ca-file=/etc/kubernetes/pki/ca.pem \\

--tls-cert-file=/etc/kubernetes/pki/apiserver.pem \\

--tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \\

--kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \\

--kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \\

--service-account-key-file=/etc/kubernetes/pki/sa.pub \\

--service-account-signing-key-file=/etc/kubernetes/pki/sa.key \\

--service-account-issuer=https://kubernetes.default.svc.cluster.local \\

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \\

--authorization-mode=Node,RBAC \\

--enable-bootstrap-token-auth=true \\

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \\

--proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \\

--proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \\

--requestheader-allowed-names=aggregator \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-extra-headers-prefix=X-Remote-Extra- \\

--requestheader-username-headers=X-Remote-User

# --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF

# Master03 configuration

cat <<EOF > /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \\

--v=2 \\

--allow-privileged=true \\

--bind-address=0.0.0.0 \\

--secure-port=6443 \\

--advertise-address=192.168.7.13 \\

--service-cluster-ip-range=10.96.0.0/12 \\

--service-node-port-range=30000-32767 \\

--etcd-servers=https://192.168.7.11:2379,https://192.168.7.12:2379,https://192.168.7.13:2379 \\

--etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \\

--etcd-certfile=/etc/etcd/ssl/etcd.pem \\

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \\

--client-ca-file=/etc/kubernetes/pki/ca.pem \\

--tls-cert-file=/etc/kubernetes/pki/apiserver.pem \\

--tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \\

--kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \\

--kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \\

--service-account-key-file=/etc/kubernetes/pki/sa.pub \\

--service-account-signing-key-file=/etc/kubernetes/pki/sa.key \\

--service-account-issuer=https://kubernetes.default.svc.cluster.local \\

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \\

--authorization-mode=Node,RBAC \\

--enable-bootstrap-token-auth=true \\

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \\

--proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \\

--proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \\

--requestheader-allowed-names=aggregator \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-extra-headers-prefix=X-Remote-Extra- \\

--requestheader-username-headers=X-Remote-User

# --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF


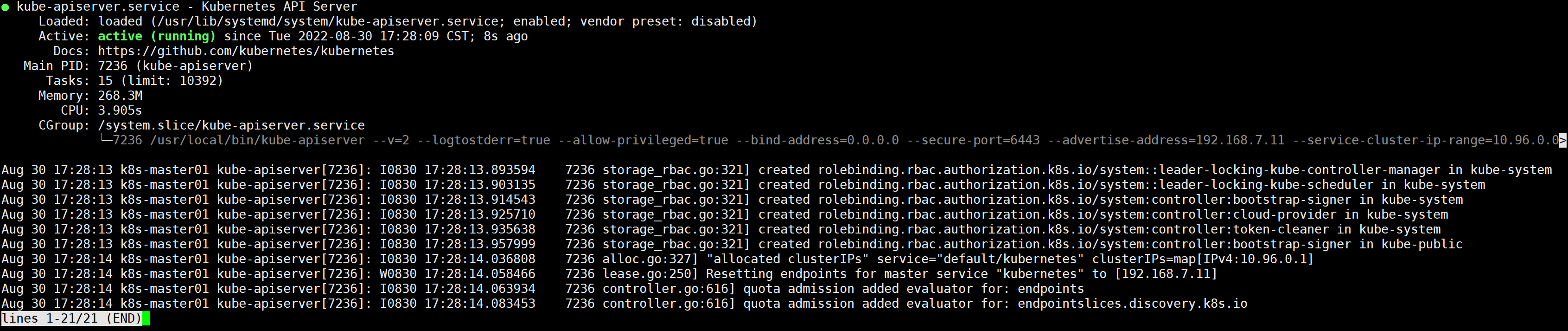

# Starting the API server

Start kube-apiserver on all master nodes:

systemctl daemon-reload && systemctl enable --now kube-apiserver

Check the kube-apiserver status:

systemctl status kube-apiserver
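`systemctl status` only confirms that the process is running. As an extra check, each API server can be probed over HTTPS. The following is a minimal sketch, assuming the three master addresses from this guide and that `curl` is installed; the function names `healthz_url` and `check_apiservers` are made up for illustration. `/healthz` is normally readable without credentials under the default RBAC bootstrap roles, and `-k` skips certificate verification, which is acceptable only for a quick smoke test.

```shell
# Master addresses used in this guide; adjust them for your own cluster.
MASTERS="192.168.7.11 192.168.7.12 192.168.7.13"

# Build the health endpoint URL for one API server.
# The port defaults to 6443, the --secure-port value set in the unit file.
healthz_url() {
  echo "https://$1:${2:-6443}/healthz"
}

# Probe every master, print one line per node, and fail if any probe fails.
check_apiservers() {
  rc=0
  for ip in $MASTERS; do
    if [ "$(curl -sk --max-time 3 "$(healthz_url "$ip")")" = "ok" ]; then
      echo "$ip: ok"
    else
      echo "$ip: NOT healthy"
      rc=1
    fi
  done
  return $rc
}
```

Running `check_apiservers` on any machine that can reach the masters prints one status line per node and returns non-zero if any of them did not answer `ok`.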

# ControllerManager

Note: this guide uses 172.16.0.0/12 as the Kubernetes Pod CIDR. It must not overlap with the host network or the Kubernetes Service CIDR; change it as needed.
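Before changing any of the three networks, the overlap rule can be checked mechanically. The sketch below is one possible way to do it in plain shell arithmetic; the helper names (`ip_to_int`, `cidr_range`, `cidrs_overlap`) are illustrative and not part of any Kubernetes tooling, and the three CIDRs are the values used in this guide.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  _old_ifs=$IFS; IFS=.
  set -- $1            # split the address on "." into $1..$4
  IFS=$_old_ifs
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Print the first and last address of a CIDR block as integers.
cidr_range() {
  _bits=${1#*/}
  _base=$(ip_to_int "${1%/*}")
  _size=$(( 1 << (32 - _bits) ))
  _first=$(( _base / _size * _size ))   # round down to the network address
  echo "$_first $(( _first + _size - 1 ))"
}

# Succeed (exit 0) when the two CIDR blocks share at least one address.
cidrs_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

HOST_NET=192.168.7.0/24
SVC_NET=10.96.0.0/12
POD_NET=172.16.0.0/12

for pair in "$POD_NET $HOST_NET" "$POD_NET $SVC_NET" "$SVC_NET $HOST_NET"; do
  set -- $pair
  if cidrs_overlap "$1" "$2"; then
    echo "OVERLAP: $1 and $2"
  else
    echo "ok: $1 and $2"
  fi
done
```

With the values from this guide all three pairs are reported as `ok`. A host network of 10.105.0.0/24 paired with the 10.96.0.0/12 Service CIDR would be reported as an overlap.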

Configure the kube-controller-manager service on all master nodes:

cat <<EOF > /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-controller-manager \\

--v=2 \\

--root-ca-file=/etc/kubernetes/pki/ca.pem \\

--cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \\

--cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \\

--service-account-private-key-file=/etc/kubernetes/pki/sa.key \\

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \\

--leader-elect=true \\

--use-service-account-credentials=true \\

--node-monitor-grace-period=40s \\

--node-monitor-period=5s \\

--controllers=*,bootstrapsigner,tokencleaner \\

--allocate-node-cidrs=true \\

--cluster-cidr=172.16.0.0/12 \\

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \\

--node-cidr-mask-size=24

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF


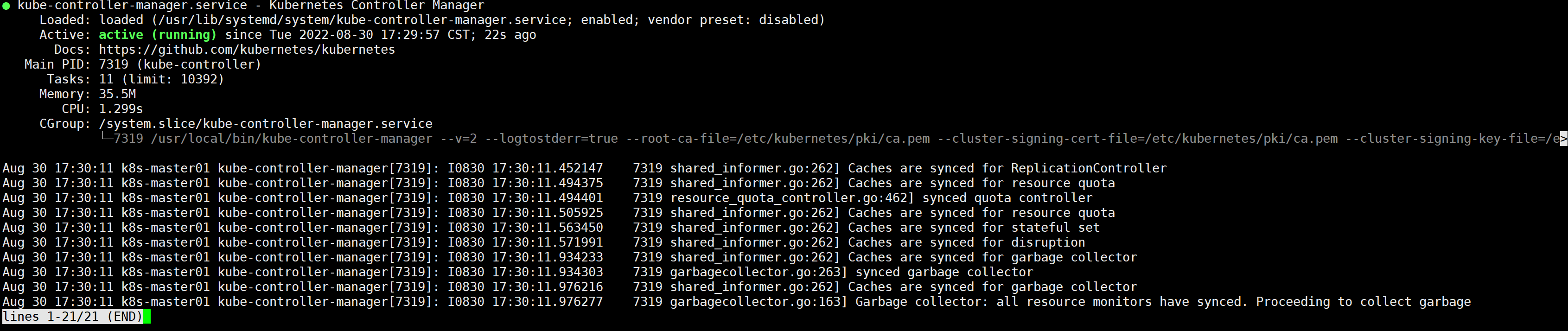

Start kube-controller-manager on all master nodes:

systemctl daemon-reload

systemctl enable --now kube-controller-manager

systemctl status kube-controller-manager


# Scheduler

Configure the kube-scheduler service on all master nodes:

cat <<EOF > /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-scheduler \\

--v=2 \\

--leader-elect=true \\

--authentication-kubeconfig=/etc/kubernetes/scheduler.kubeconfig \\

--authorization-kubeconfig=/etc/kubernetes/scheduler.kubeconfig \\

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF


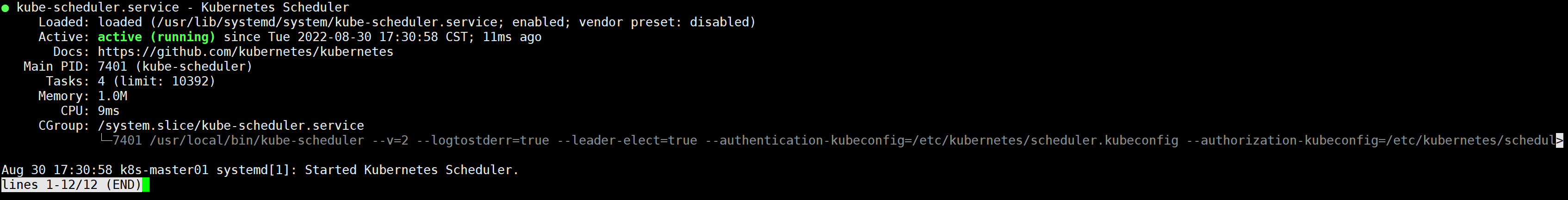

Start kube-scheduler on all master nodes:

systemctl daemon-reload

systemctl enable --now kube-scheduler

systemctl status kube-scheduler
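Both kube-controller-manager and kube-scheduler run with `--leader-elect=true`, so on three masters only one instance of each is active at a time. A small sketch for seeing which node currently holds the lead is shown below. It assumes the admin kubeconfig at /etc/kubernetes/admin.kubeconfig and relies on the Lease objects that leader election keeps in the kube-system namespace; the function names are illustrative.

```shell
# Admin kubeconfig generated earlier in this guide; override if yours lives elsewhere.
ADMIN_KUBECONFIG=${ADMIN_KUBECONFIG:-/etc/kubernetes/admin.kubeconfig}

# Print the current holder of a leader-election Lease in kube-system.
# $1 is the Lease name: kube-controller-manager or kube-scheduler.
lease_holder() {
  kubectl --kubeconfig="$ADMIN_KUBECONFIG" -n kube-system get lease "$1" \
    -o jsonpath='{.spec.holderIdentity}'
}

# Print one "component: holder" line per control-plane component.
show_leaders() {
  for component in kube-controller-manager kube-scheduler; do
    echo "$component: $(lease_holder "$component")"
  done
}
```

Calling `show_leaders` on a master prints the holder identity for each component; stopping the service on the leading node should move the Lease to another master after a short delay.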


# TLS Bootstrapping configuration

Note: for a non-HA cluster, replace 192.168.7.10:8443 with the master01 address and change 8443 to the apiserver port (6443 by default).

Create the bootstrap kubeconfig on k8s-master01:

cd /root/k8s-ha-install/bootstrap

kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://192.168.7.10:8443 --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

kubectl config set-credentials tls-bootstrap-token-user --token=c8ad9c.2e4d610cf3e7426e --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

kubectl config set-context tls-bootstrap-token-user@kubernetes --cluster=kubernetes --user=tls-bootstrap-token-user --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

kubectl config use-context tls-bootstrap-token-user@kubernetes --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig


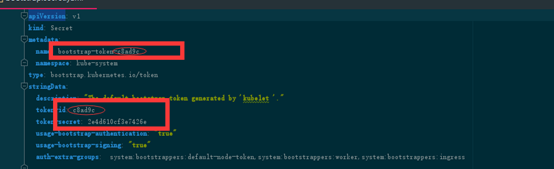

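Note: if you change the token-id and token-secret in bootstrap.secret.yaml, make sure the strings circled in red in the figure stay consistent with each other and keep the same length. The token passed to the set-credentials command above (c8ad9c.2e4d610cf3e7426e, highlighted in yellow) must also match the values you set.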
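To make the length requirement concrete: a bootstrap token has the form `<token-id>.<token-secret>`, where the id is 6 characters and the secret is 16 characters, all lowercase letters or digits, and the Secret that stores it is named `bootstrap-token-<token-id>`. The following sketch validates a token and generates a fresh one; the helper names are hypothetical and the generator is only one possible approach.

```shell
# Succeed when the argument has the bootstrap token shape:
# 6 characters, a dot, then 16 characters, lowercase letters and digits only.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Split a token into the two values stored in bootstrap.secret.yaml.
token_id()     { printf '%s\n' "${1%%.*}"; }
token_secret() { printf '%s\n' "${1#*.}"; }

# Generate a random token from /dev/urandom.
# Hex digits are a valid subset of the allowed characters.
new_bootstrap_token() {
  id=$(od -An -N3 -tx1 /dev/urandom | tr -d ' \n')
  secret=$(od -An -N8 -tx1 /dev/urandom | tr -d ' \n')
  echo "$id.$secret"
}

TOKEN=c8ad9c.2e4d610cf3e7426e   # the token used in this guide
is_bootstrap_token "$TOKEN" && echo "Secret name: bootstrap-token-$(token_id "$TOKEN")"
```

If you generate a new token, the same value has to go into three places: the `--token` argument of `kubectl config set-credentials`, and the token-id and token-secret fields of bootstrap.secret.yaml.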

Copy the admin kubeconfig into place on all master nodes:

mkdir -p /root/.kube; cp /etc/kubernetes/admin.kubeconfig /root/.kube/config

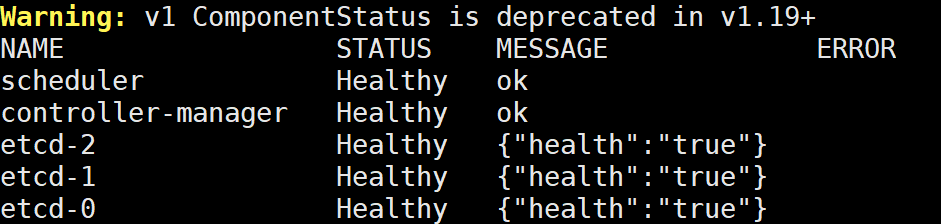

Check the cluster status:

Continue only if the cluster status is healthy; otherwise check the Kubernetes components for failures.

kubectl get cs

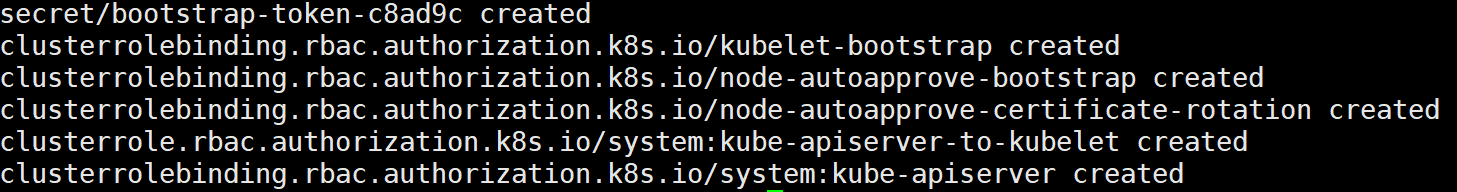

Create the bootstrap resources:

kubectl create -f bootstrap.secret.yaml

# Node configuration

# Copying certificates

On k8s-master01, copy the certificates to the other nodes:

cd /etc/kubernetes/

for NODE in k8s-master02 k8s-master03 k8s-node01 k8s-node02; do

ssh $NODE mkdir -p /etc/kubernetes/pki /etc/etcd/ssl

for FILE in etcd-ca.pem etcd.pem etcd-key.pem; do

scp /etc/etcd/ssl/$FILE $NODE:/etc/etcd/ssl/

done

for FILE in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig; do

scp /etc/kubernetes/$FILE $NODE:/etc/kubernetes/${FILE}

done

done


# Kubelet configuration

Create the required directories on all nodes:

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/

Configure the kubelet service on all nodes:

cat <<EOF > /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

[Service]

ExecStart=/usr/local/bin/kubelet

Restart=always

StartLimitInterval=0

RestartSec=10

[Install]

WantedBy=multi-user.target

EOF


Create the drop-in configuration for the kubelet service on all nodes:

[ ! -d /etc/systemd/system/kubelet.service.d ] && mkdir /etc/systemd/system/kubelet.service.d

cat <<'EOF' > /etc/systemd/system/kubelet.service.d/10-kubelet.conf

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig --kubeconfig=/etc/kubernetes/kubelet.kubeconfig"

Environment="KUBELET_SYSTEM_ARGS=--runtime-request-timeout=15m --container-runtime-endpoint=unix:///run/containerd/containerd.sock"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

EOF


Create the kubelet configuration file on all nodes:

Note: if you changed the Kubernetes Service CIDR, update clusterDNS in kubelet-conf.yml to the tenth address of that CIDR, for example 10.96.0.10.

cat <<EOF > /etc/kubernetes/kubelet-conf.yml

apiVersion: kubelet.config.k8s.io/v1beta1

kind: KubeletConfiguration

address: 0.0.0.0

port: 10250

readOnlyPort: 10255

authentication:

  anonymous:

    enabled: false

  webhook:

    cacheTTL: 2m0s

    enabled: true

  x509:

    clientCAFile: /etc/kubernetes/pki/ca.pem

authorization:

  mode: Webhook

  webhook:

    cacheAuthorizedTTL: 5m0s

    cacheUnauthorizedTTL: 30s

cgroupDriver: systemd

cgroupsPerQOS: true

clusterDNS:

- 10.96.0.10

clusterDomain: cluster.local

containerLogMaxFiles: 5

containerLogMaxSize: 10Mi

contentType: application/vnd.kubernetes.protobuf

cpuCFSQuota: true

cpuManagerPolicy: none

cpuManagerReconcilePeriod: 10s

enableControllerAttachDetach: true

enableDebuggingHandlers: true

enforceNodeAllocatable:

- pods

eventBurst: 10

eventRecordQPS: 5

evictionHard:

  imagefs.available: 15%

  memory.available: 100Mi

  nodefs.available: 10%

  nodefs.inodesFree: 5%

evictionPressureTransitionPeriod: 5m0s

failSwapOn: true

fileCheckFrequency: 20s

hairpinMode: promiscuous-bridge

healthzBindAddress: 127.0.0.1

healthzPort: 10248

httpCheckFrequency: 20s

imageGCHighThresholdPercent: 85

imageGCLowThresholdPercent: 80

imageMinimumGCAge: 2m0s

iptablesDropBit: 15

iptablesMasqueradeBit: 14

kubeAPIBurst: 10

kubeAPIQPS: 5

makeIPTablesUtilChains: true

maxOpenFiles: 1000000

maxPods: 110

nodeStatusUpdateFrequency: 10s

oomScoreAdj: -999

podPidsLimit: -1

registryBurst: 10

registryPullQPS: 5

resolvConf: /etc/resolv.conf

rotateCertificates: true

runtimeRequestTimeout: 2m0s

serializeImagePulls: true

staticPodPath: /etc/kubernetes/manifests

streamingConnectionIdleTimeout: 4h0m0s

syncFrequency: 1m0s

volumeStatsAggPeriod: 1m0s

EOF
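The clusterDNS value in this file follows the convention of using the tenth address of the Service CIDR. If you use a different Service CIDR, that address can be computed rather than guessed. The sketch below does the arithmetic in plain shell; `ip_to_int`, `int_to_ip` and `nth_ip` are illustrative helper names.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  _old_ifs=$IFS; IFS=.
  set -- $1            # split the address on "." into $1..$4
  IFS=$_old_ifs
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Convert a 32-bit integer back to dotted-quad notation.
int_to_ip() {
  echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Print the Nth address of a CIDR block, counting from the network address.
nth_ip() {
  _bits=${1#*/}
  _size=$(( 1 << (32 - _bits) ))
  _base=$(ip_to_int "${1%/*}")
  int_to_ip $(( _base / _size * _size + $2 ))
}

nth_ip 10.96.0.0/12 10      # prints 10.96.0.10
nth_ip 192.168.0.0/16 10    # prints 192.168.0.10
```

The same value is needed again later for the CoreDNS Service IP, so it is worth computing once and reusing.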


Start kubelet on all nodes:

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet


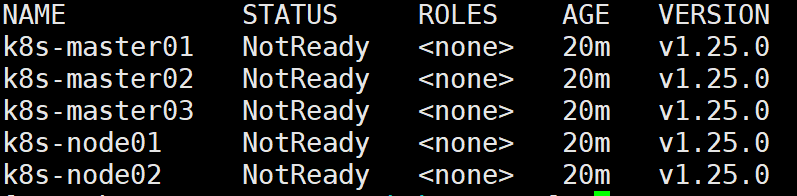

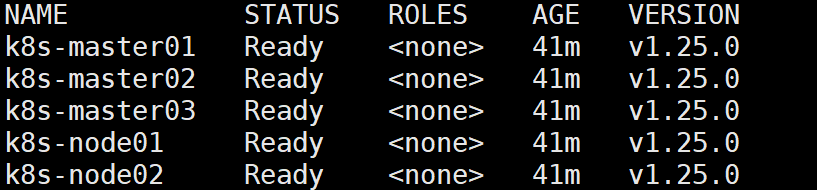

Check the cluster status:

If the networkReady status is abnormal, that is because Calico has not been installed yet; it can be ignored for now.

kubectl get node

# kube-proxy configuration

Note: for a non-HA cluster, replace 192.168.7.10:8443 with the master01 address and change 8443 to the apiserver port (6443 by default).

Run on k8s-master01:

cd /root/k8s-ha-install

kubectl -n kube-system create serviceaccount kube-proxy

kubectl create clusterrolebinding system:kube-proxy --clusterrole system:node-proxier --serviceaccount kube-system:kube-proxy

SECRET=$(kubectl -n kube-system get sa/kube-proxy \

--output=jsonpath='{.secrets[0].name}')

JWT_TOKEN=$(kubectl -n kube-system get secret/$SECRET \

--output=jsonpath='{.data.token}' | base64 -d)

PKI_DIR=/etc/kubernetes/pki

K8S_DIR=/etc/kubernetes

kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://192.168.7.10:8443 --kubeconfig=${K8S_DIR}/kube-proxy.kubeconfig

kubectl config set-credentials kubernetes --token=${JWT_TOKEN} --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config set-context kubernetes --cluster=kubernetes --user=kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config use-context kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

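# Work around the User "system:anonymous" error (this grants cluster-admin to unauthenticated requests, so use it only on a lab cluster)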

kubectl create clusterrolebinding the-boss --user system:anonymous --clusterrole cluster-admin
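One caveat for this Kubernetes version: since v1.24, creating a ServiceAccount no longer creates a token Secret automatically, so the `.secrets[0].name` lookup above may come back empty, and `JWT_TOKEN` would then be empty as well. A possible alternative, shown here only as a sketch, is to request a long-lived token explicitly by creating a Secret of type `kubernetes.io/service-account-token`. The Secret name `kube-proxy-token` and both function names are assumptions made for this example and are not part of the original guide.

```shell
# Print a manifest for a long-lived token Secret bound to the kube-proxy ServiceAccount.
# The Secret name "kube-proxy-token" is an arbitrary choice for this sketch.
kube_proxy_token_manifest() {
  cat <<'EOF'
apiVersion: v1
kind: Secret
metadata:
  name: kube-proxy-token
  namespace: kube-system
  annotations:
    kubernetes.io/service-account.name: kube-proxy
type: kubernetes.io/service-account-token
EOF
}

# Create the Secret, then read back the token that the control plane fills in.
fetch_kube_proxy_token() {
  kube_proxy_token_manifest | kubectl apply -f - >/dev/null
  kubectl -n kube-system get secret kube-proxy-token \
    --output=jsonpath='{.data.token}' | base64 -d
}
```

With this in place, `JWT_TOKEN=$(fetch_kube_proxy_token)` could stand in for the two lookups above. The token is populated asynchronously, so if the result is empty on the first call, wait a moment and run it again before building the kubeconfig.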


On k8s-master01, send the kubeconfig to the other nodes:

for NODE in k8s-master02 k8s-master03; do

scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig

done

for NODE in k8s-node01 k8s-node02; do

scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig

done


Add the kube-proxy configuration and service files on all nodes:

cat <<EOF > /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Kube Proxy

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-proxy \\

--config=/etc/kubernetes/kube-proxy.yaml \\

--v=2

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF


If you changed the cluster Pod CIDR, set clusterCIDR in kube-proxy.yaml to your own Pod CIDR:

cat <<EOF > /etc/kubernetes/kube-proxy.yaml

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 0.0.0.0

clientConnection:

  acceptContentTypes: ""

  burst: 10

  contentType: application/vnd.kubernetes.protobuf

  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

  qps: 5

clusterCIDR: 172.16.0.0/12

configSyncPeriod: 15m0s

conntrack:

  max: null

  maxPerCore: 32768

  min: 131072

  tcpCloseWaitTimeout: 1h0m0s

  tcpEstablishedTimeout: 24h0m0s

enableProfiling: false

healthzBindAddress: 0.0.0.0:10256

hostnameOverride: ""

iptables:

  masqueradeAll: false

  masqueradeBit: 14

  minSyncPeriod: 0s

  syncPeriod: 30s

ipvs:

  masqueradeAll: true

  minSyncPeriod: 5s

  scheduler: "rr"

  syncPeriod: 30s

kind: KubeProxyConfiguration

metricsBindAddress: 127.0.0.1:10249

mode: "ipvs"

nodePortAddresses: null

oomScoreAdj: -999

portRange: ""

udpIdleTimeout: 250ms

EOF


Start kube-proxy on all nodes:

systemctl daemon-reload

systemctl enable --now kube-proxy

systemctl status kube-proxy
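The configuration above requests `mode: "ipvs"`. To confirm which mode actually took effect on a node, kube-proxy can be queried on its metrics address. This is a rough sketch that assumes `curl` is installed and that the `/proxyMode` endpoint is served on the metricsBindAddress (127.0.0.1:10249) configured above; the function names are illustrative.

```shell
# Ask the local kube-proxy which proxy mode it is running in.
# 10249 is the metricsBindAddress port set in kube-proxy.yaml.
proxy_mode() {
  curl -s --max-time 3 http://127.0.0.1:10249/proxyMode
}

# Report whether the mode matches the ipvs setting used in this guide.
check_proxy_mode() {
  mode=$(proxy_mode)
  if [ "$mode" = "ipvs" ]; then
    echo "kube-proxy is using ipvs"
  else
    echo "unexpected proxy mode: ${mode:-no answer}"
    return 1
  fi
}
```

Run `check_proxy_mode` on each node after starting the service. An unexpected answer usually points at the kernel IPVS modules configured during the base environment setup.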


# Installing Calico

# Installing the officially recommended version

Run on k8s-master01:

cd /root/k8s-ha-install/calico/

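Change the Calico network by replacing the POD_CIDR placeholder with your own Pod CIDR:

sed -i "s|POD_CIDR|172.16.0.0/12|g" calico.yaml

Check that the CIDR has been changed to your Pod CIDR:

grep "IPV4POOL_CIDR" calico.yaml -A 1

Install Calico:

kubectl apply -f calico.yaml

Verify the nodes again; they should now all be Ready: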

kubectl get node

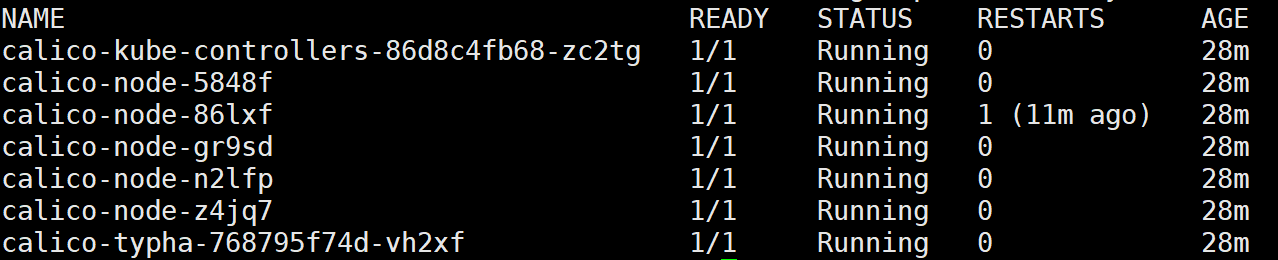

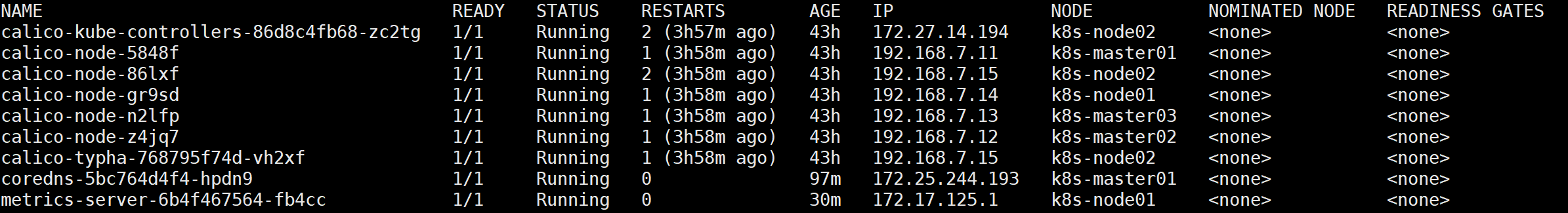

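Check the Pod status:

kubectl get po -n kube-system

If a Pod is in an abnormal state, use kubectl describe or kubectl logs to inspect it.

# Installing CoreDNS

# Installing the officially recommended version

Run on k8s-master01:

cd /root/k8s-ha-install/

# If you changed the Kubernetes Service CIDR, set the CoreDNS Service IP to the tenth IP of that CIDR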

COREDNS_SERVICE_IP=`kubectl get svc | grep kubernetes | awk '{print $3}'`0

sed -i "s#KUBEDNS_SERVICE_IP#${COREDNS_SERVICE_IP}#g" CoreDNS/coredns.yaml


Install CoreDNS:

kubectl create -f CoreDNS/coredns.yaml

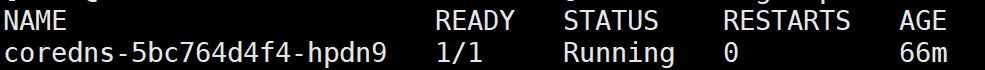

Check the Pod status:

kubectl get po -n kube-system -l k8s-app=kube-dns

# 安装 Metrics Server

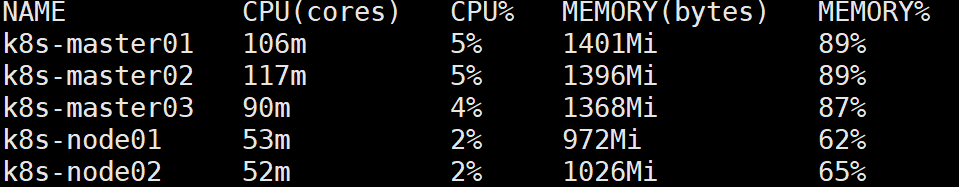

在新版的 Kubernetes 中系统资源的采集均使用 Metrics-server ,可以通过 Metrics 采集节点和Pod的内存、磁盘、CPU和网络的使用率。

k8s-master01 节点执行:

cd /root/k8s-ha-install/metrics-server

kubectl create -f .


Wait for metrics-server to start, then check the status:

kubectl top node

# 集群验证

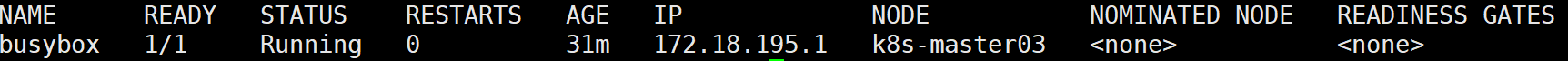

安装 busybox:

cat<<EOF | kubectl apply -f -

apiVersion: v1

kind: Pod

metadata:

  name: busybox

  namespace: default

spec:

  containers:

  - name: busybox

    image: busybox:1.28

    command:

    - sleep

    - "3600"

    imagePullPolicy: IfNotPresent

  restartPolicy: Always

EOF


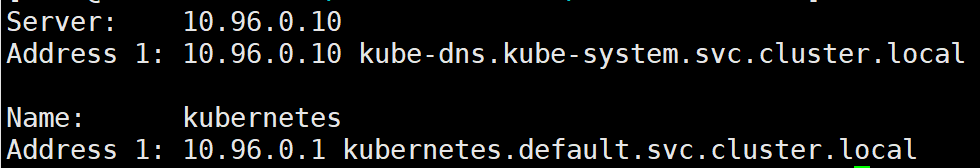

# Pods must be able to resolve Services

kubectl exec busybox -n default -- nslookup kubernetes

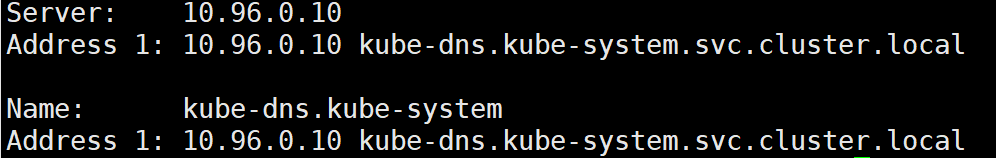

# Pods must be able to resolve Services in other namespaces

kubectl exec busybox -n default -- nslookup kube-dns.kube-system

# Every node must be able to reach the kubernetes Service on port 443 and the kube-dns Service on port 53

kubectl get svc # CLUSTER-IP is the kubernetes Service address

kubectl get svc -n kube-system | grep kube-dns # CLUSTER-IP is the kube-dns Service address

Run on all nodes:

telnet 10.96.0.1 443

telnet 10.96.0.10 53

If both ports accept the connection, there is no problem.
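On nodes where telnet is not installed, or when the check needs to be scripted, the same test can be done with bash alone. The sketch below assumes bash and the coreutils `timeout` command are available; `port_open` and `check_targets` are illustrative names, and the two addresses in the usage note are the Service IPs from this guide.

```shell
# Succeed when a TCP connection to host:port can be opened within 3 seconds.
# Relies on bash's /dev/tcp pseudo-device and the coreutils timeout command.
port_open() {
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Check a list of host:port targets and report each result.
# Returns non-zero if any target could not be reached.
check_targets() {
  rc=0
  for target in "$@"; do
    if port_open "${target%:*}" "${target#*:}"; then
      echo "$target reachable"
    else
      echo "$target NOT reachable"
      rc=1
    fi
  done
  return $rc
}
```

For example, `check_targets 10.96.0.1:443 10.96.0.10:53` covers the two Services checked above. Like telnet, this only tests TCP connectivity.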

# Pod和Pod之间要能通信

kubectl get pod -n kube-system -owide

# 同namespace能通信

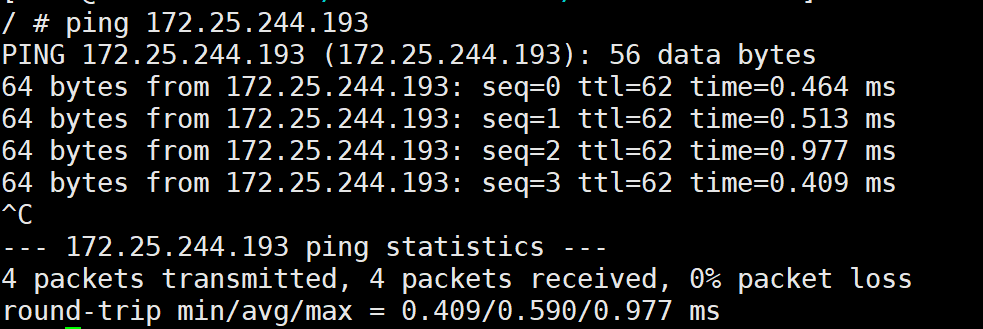

选取同 namespace 的两个容器,进入执行 ping 命令看是否能通。

# 跨namespace能通信

选取不同 namespace 的两个容器,进入执行 ping 命令看是否能通:这里以刚才安装的 busybox 为例:

kubectl get pod -owide

kubectl exec -it busybox -- sh # 进入容器执行sh

选取一个其他 namespace 的 ip 进行测试:

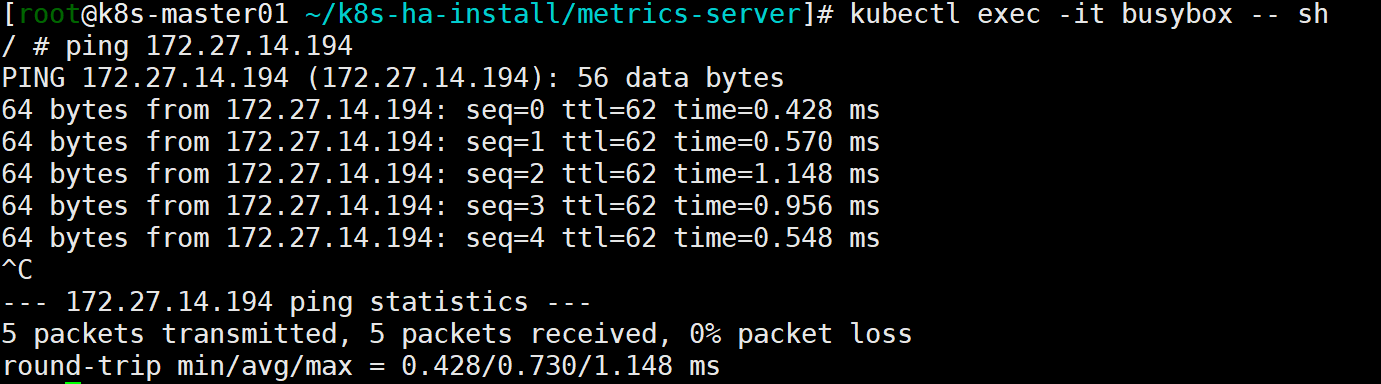

# 跨机器能通信

选取一个其他节点的 ip 进行测试:

到此集群基本上验证完毕。

# Installing the dashboard

# Installing a specific dashboard version

cd /root/k8s-ha-install/dashboard/

# Install the dashboard

kubectl create -f dashboard.yaml

# Create the token

kubectl create -f dashboard-user.yaml


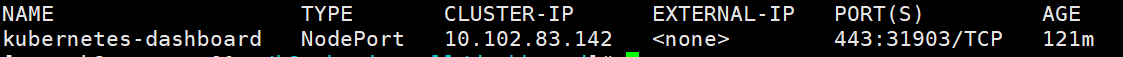

Check the port:

kubectl get svc kubernetes-dashboard -n kubernetes-dashboard

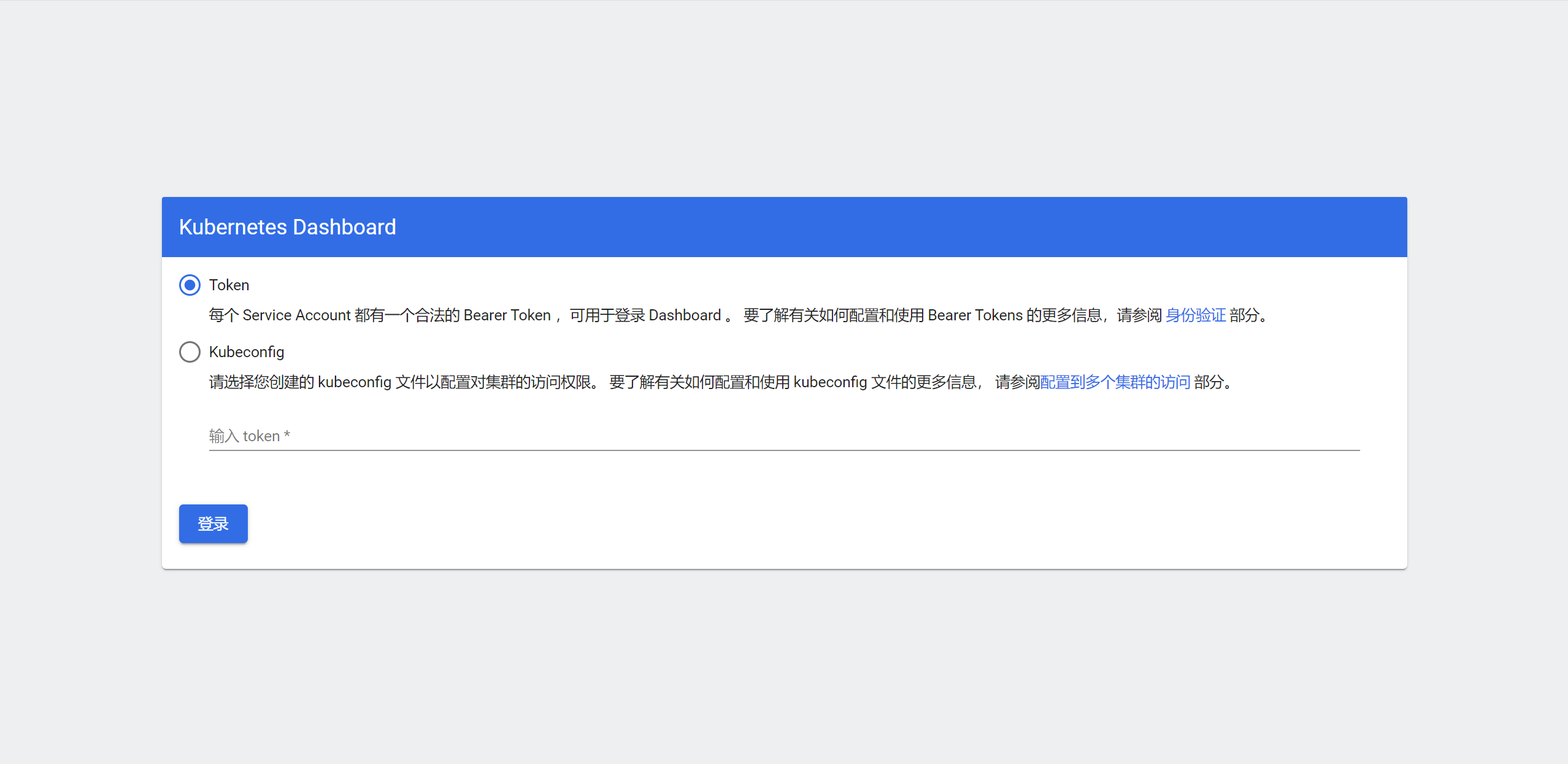

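Using the port shown for your own instance, the dashboard can be reached through the IP of any host running kube-proxy plus that port. Open the Dashboard at https://192.168.7.10:30287/#/login and choose token as the login method:

Create the dashboard token on k8s-master01: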

kubectl -n kube-system create token admin-user

Configure kubectl command completion (see the kubectl cheat sheet):

source <(kubectl completion bash) # set up completion for the current bash shell; the bash-completion package must be installed first

echo "source <(kubectl completion bash)" >> ~/.bashrc # enable completion permanently in your bash shell